Having just started using sonar, I would like to check in on the status of this.

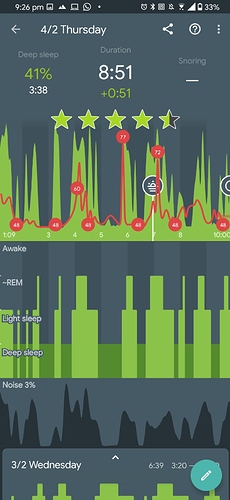

The image above shows my latest sleep tracking results, recorded using an Amazfit Bip S (which has an accelerometer) and sonar together. The top waveform is presumably the accelerometer input and the bottom one the sonar input. The peaks of the two mostly align, but their amplitudes differ in some places. This is not surprising: the accelerometer measures acceleration, whereas the sonar probably measures some form of displacement or velocity.
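A minimal, idealized sketch of why the amplitudes can differ even where the peaks line up. Assume, purely for illustration, that a movement is a single sinusoid of amplitude $A$ and angular frequency $\omega$, and that the sonar reports something proportional to displacement (this is an assumption, not how either sensor is documented to work):

$$
x(t) = A\sin(\omega t), \qquad v(t) = \dot{x}(t) = A\omega\cos(\omega t), \qquad a(t) = \ddot{x}(t) = -A\omega^{2}\sin(\omega t) = -\omega^{2}x(t)
$$

Because $a(t) = -\omega^{2}x(t)$, displacement and acceleration peak at the same instants, but the ratio of their amplitudes, $\omega^{2}$, depends on how fast the movement is: a quick twitch with small displacement can produce a large acceleration peak, while a slow roll-over with large displacement produces a comparatively small one. If the sonar reported velocity instead, its peaks would be shifted by a quarter period relative to the accelerometer's.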

Now, I see above that the Urbandroid team does not consider implementing support for both sensors a priority:

> We are planning it in future, but to be honest, not very near future. Sleep tracking with real-time analysis is quite complicated even when we use only one source of data, so introducing two flows of data and two real-time estimation computing would be tricky and CPU demanding (hence battery demanding as well).

and that some users disagree with using two data streams as input. Note, however, that input support is different from input analysis.

First, input support. Question: Can we currently capture sonar and accelerometer input at the same time? If not, when might this be possible? If both streams are captured, they could at least be uploaded to SleepCloud, where enthusiastic individuals could try using them together as an additional source of accuracy improvements.

Second, input analysis. I'm not entirely sure, but sonar seems to detect displacement or velocity, while the accelerometer definitely detects acceleration. Question: Isn't it more accurate to get displacement, velocity, or acceleration directly from the sensor that measures it, rather than having to differentiate or integrate another sensor's signal? Analysis capability would be useful regardless of battery concerns: users who use sonar probably charge their phone overnight anyway, since, if memory serves, the support docs say somewhere that "sonar drains the battery".
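To make the integrate-versus-differentiate question concrete, here is a minimal sketch of the two classic failure modes. It is generic signal processing, not a description of how Sleep as Android processes data. Double-integrating acceleration turns even a tiny constant sensor bias into a displacement error that grows with the square of time, while differentiating a displacement signal amplifies its sample-to-sample noise by roughly √2/dt. The sampling rate (10 Hz), bias (0.01 m/s²), and noise level are assumed values chosen only for illustration, and all function names are hypothetical.

```python
import random
import statistics


def integrate(samples, dt):
    """Cumulative rectangle-rule integral of a uniformly sampled signal."""
    total = 0.0
    out = []
    for s in samples:
        total += s * dt
        out.append(total)
    return out


def differentiate(samples, dt):
    """Forward-difference derivative; the output is one sample shorter."""
    return [(b - a) / dt for a, b in zip(samples, samples[1:])]


def drift_from_bias(bias, dt, n):
    """Displacement error after double-integrating a constant accelerometer bias."""
    velocity = integrate([bias] * n, dt)      # error grows linearly in time
    displacement = integrate(velocity, dt)    # error grows quadratically in time
    return displacement[-1]


def noise_gain_from_differentiation(sigma, dt, n, seed=0):
    """Ratio of noise std after vs. before differentiating white noise of std `sigma`."""
    rng = random.Random(seed)
    noise = [rng.gauss(0.0, sigma) for _ in range(n)]
    derivative = differentiate(noise, dt)
    return statistics.pstdev(derivative) / statistics.pstdev(noise)


if __name__ == "__main__":
    # Assumed values: 60 s sampled at 10 Hz with a 0.01 m/s^2 bias.
    print(f"drift after 60 s: {drift_from_bias(0.01, 0.1, 600):.2f} m")  # 18.03 m
    # Expected to be close to sqrt(2) / 0.1, i.e. roughly 14x.
    print(f"noise gain: {noise_gain_from_differentiation(0.001, 0.1, 10_000):.1f}x")
```

Under these assumptions, an 18 m "movement" appears after one minute purely from bias, which is why a sensor that measures the quantity of interest directly is generally preferable to one whose signal must be integrated or differentiated.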