Another request for this. Like others, this is what I assumed it was doing when I enabled the Wear OS watch and the sonar…

I would also be interested in using both tracking systems at the same time.

Guess this just isn’t happening for some reason. I still don’t understand why, it’s a no-brainer and would be more beneficial to most people than so many of the features that are being added to Sleep as Android.

Everyone interested in this possibility please ensure you voted this feature up if you haven’t already so hopefully it gets more visibility.

How can we up-vote it? Yesterday I got the go2Sleep sensor, and I am very interested in the O2 and heart-rate measurements (until now I have used a Polar H7, which is not always comfortable). However, I realized that after activating the wearable (third-party in this case), the sonar and/or the accelerometer of my smartphone no longer works.

As the go2Sleep extension does not include movement tracking right now, I would very much like to have this feature available on my smartphone, as I am sure that measurement with movement is more accurate than without. It’s a bit unfortunate, because right now I have movement tracking using sonar plus heart-rate tracking using the Polar H7. With the go2Sleep sensor I could use its heart-rate sensor, which is much more comfortable, plus oxygen measurement, but then movement tracking is not available.

Thank you in advance,

Tobi

PS: If I can support you here, just get in touch with me.

Click the orange button that says “Vote” in the upper left of this page.

As many have indicated, the ability to use HR data from a non Bluetooth smart wearable (but one that is already integrated) in conjunction with sonar or the sleep phaser (my preference) would be fantastic. Maybe all it needs is a checkbox/switch to ignore accelerometer data from the watch?

Honorary vote, since I’ve already used up my 5-vote limit (why can’t we have more votes?). The more accuracy the better, especially since if one set of readings is suspected to be in error, the other readings can help confirm or refute that.

Though users may need a fast charger if their phone can’t keep up with charging. Luckily I already have one, from back when my phone used to go dead despite being plugged in. It’s all good now.

I would really like to see this supported. I started using Sleep as Android years ago with just my phone, first accelerometer then sonar mode, then I bought a phaser for better tracking and light, and now I have a WearOS watch with the app installed. I was disappointed to learn that the wear app effectively made my phaser useless now. Instead of just manually selecting the tracking source for different types of data (movement, breathing, heart rate, etc), why not use all available data sources and combine them for higher accuracy? I don’t like having to choose between the phaser that I bought that can do movement & breathing, and the watch that I have now for movement & HR.

Is there any progress on this feature request? Seems like it has been open for nearly 2.5 years and still not implemented.

I’m no techy, but since you were open to ideas of how to get this combination of functions to be doable: Have a separate graph running parallel to the other(s) at the same time. All the available sensors chosen and added by the user can be rendered in their own track.

When the results are shown to the user, the visual graphs can be compressed to show them together, or it can be made clear that the user can drag downward or click an arrow to view the second set of readings below the first. Example: Accelerometer on top half of the screen, and sonar on the bottom half, plus the heart rate etc symbols/digits overlaid over whichever graph is shown on top.

Or alternatively, overlay the two graphs, with a dotted or striped pattern in an alternative color (chosen by the user in the Customization option) on any areas where the two graphs differ. Example: The user chooses which graph they want as the Primary Sensor (Accelerometer or Sonar) and picks the color for it, which will be the most prominent color shown. Then they choose a color for the Secondary Sensor. If there’s a discrepancy and the Primary Sensor shows a vertically higher value (say, REM while the Secondary Sensor shows Light Sleep), the topmost segment is drawn as a striped or spotted version of the Primary color, with everything from Light Sleep on down still in the solid Primary color. But if the Secondary Sensor has the higher value, then the extra segment above the solid Primary-colored graph is drawn in the Secondary color (either dotted/striped or solid, whichever most users prefer).

In the early testing phase, different things can be attempted to get what testers will agree seems accurate.

Or see what comes closest to the results given by a polysomnograph, if anyone can test with one (though I’m not sure how hard or easy it is to get access to one).

Or a possibly easier method if one has two of the same phone model, and uses the app with sonar on one and accelerometer on the other (and/or all the other input methods users have). Then converting the readouts into rough chunks (numerical or otherwise) based on timestamps, and importing them to a document reader that can compare the two, highlighting areas that don’t match so you can see how negligible or noteworthy the discrepancies are.
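To make the timestamp comparison a bit more concrete, here is a rough Python sketch. It assumes each recording has already been exported as a list of `(timestamp_in_seconds, stage)` pairs; that format, the stage names, and the function names are all made up for illustration and are not anything the app actually produces.

```python
from collections import Counter

def bucket_stages(samples, bucket_seconds=300):
    """Collapse (timestamp, stage) samples into one stage per time bucket.

    Each bucket gets the stage that occurs most often inside it.
    """
    buckets = {}
    for ts, stage in samples:
        buckets.setdefault(ts // bucket_seconds, []).append(stage)
    return {b: Counter(stages).most_common(1)[0][0] for b, stages in buckets.items()}

def compare(a, b, bucket_seconds=300):
    """Return (bucket_start, stage_a, stage_b) for buckets where the recordings disagree."""
    ba = bucket_stages(a, bucket_seconds)
    bb = bucket_stages(b, bucket_seconds)
    shared = sorted(ba.keys() & bb.keys())  # only compare buckets both recordings cover
    return [(k * bucket_seconds, ba[k], bb[k]) for k in shared if ba[k] != bb[k]]

# Hypothetical readouts from two phones (timestamps in seconds since start).
sonar = [(0, "light"), (120, "light"), (300, "deep"), (600, "rem")]
accel = [(0, "light"), (200, "light"), (310, "light"), (650, "rem")]

mismatches = compare(sonar, accel)
print(mismatches)
```

The bucket size controls how "rough" the chunks are: larger buckets hide small timing offsets between the two phones, smaller ones show more detail.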

- Prioritizing the results of whichever reading seems to be more accurate.
- Calculating an average between all the readings.
- Or if that doesn’t seem to be enough, digging deeper to figure out why those differences occur, and using your resources to tweak the way each is interpreted by the current calculators until they seem to match, both with each other and reality. A more difficult undertaking, but beneficial in the long run if averaging doesn’t seem to work.
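As a very rough illustration of the first two options, here is a Python sketch. It assumes each sensor has already been reduced to a list of activity values on the same time grid, with `None` where a reading is missing. The 0-to-1 scale, the sample numbers, and the function names are invented for the example; this is not how the app works internally.

```python
def fuse_priority(primary, secondary):
    """Use the primary reading; fall back to the secondary where the primary is missing."""
    return [p if p is not None else s for p, s in zip(primary, secondary)]

def fuse_average(*streams):
    """Average whatever readings are available at each time step."""
    fused = []
    for values in zip(*streams):
        present = [v for v in values if v is not None]
        fused.append(sum(present) / len(present) if present else None)
    return fused

# Hypothetical normalized activity values (0 = still, 1 = lots of movement).
sonar = [0.0, 1.0, None, 0.5]
accel = [0.5, 0.5, 0.25, None]

prioritized = fuse_priority(sonar, accel)
averaged = fuse_average(sonar, accel)
print(prioritized)
print(averaged)
```

Which stream counts as "primary" is simply chosen by hand here; deciding automatically which reading "seems more accurate" is the hard part and is not covered by this sketch.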

Easier said than done, definitely. But accuracy adjustments should be expected from this app over time anyway. This would potentially provide a means for the devs to accomplish that by having the perspectives of multiple tools giving their input, like group brainstorming.

I also want to give my honorary vote to this. I’d love to see this feature implemented. I wanted to use breathing rate from sonar and heart rate from a Mi Band, and I was pretty disappointed when I found out it doesn’t work.

I thought I would let others in this thread know, I had emailed Urbandroid about this also and got this reply:

We are planning it in future, but to be honest, not very near future. Sleep tracking with real-time analysis is quite complicated even when we use only one source of data, so introducing two flows of data and two real-time estimation computing would be tricky and CPU demanding (hence battery demanding as well).

So hopefully they will eventually add support for this.

I don’t think multiple streams of similar sensors make sense for this application. Its main function is real-time sleep stage estimation to work out when to kick off a smart alarm, not really post-hoc sensor analysis as you would run in a sleep study, though there is some of that in the app as well. However, selecting one sensor for actigraphy and a different sensor for HR should be no more challenging than choosing a Sleep Phaser and a BT Smart heart rate sensor. We can already do this: I run a Sleep Phaser and a Mio Slice BT wrist HR monitor (BT Smart), and it makes a big difference to the sleep stage estimates.

What I would really love is to use a Wellue O2Ring or a go2sleep ring for the HR and SpO2 data only, and the Sleep Phaser for movement. Right now I have to record SpO2 and HR via the O2Ring to its own app and wear the Mio Slice for SaA, but correlating the two after sleep is not that easy, and SaA is not capturing SpO2 data. Also, wearing two devices to extract the same data is not the most pleasant experience.

Seems like they could at least allow both to be tracked initially on the graphs which doesn’t seem like much of an ask since it already does this, but you have to choose one or the other. If the analysis part is complicated and such, then okay, you would have to choose one or the other for that part. But it would be nice to see both heart rate and breath rate tracked on the same night for now.

I don’t understand the battery limitation when the vast majority of people in the world charge their phone overnight. Am I missing something that makes that a limitation? And why would anyone care about it being CPU-demanding? Aren’t they sleeping (or trying to sleep)?

Possible partial workaround: Don’t connect your wearable to Sleep as Android, but sync it to Google Fit. Use sonar for sleep tracking. When you wake up, sync the wearable data to Google Fit, then go back to SaA and sync services. Your heart rate data will be added to your sleep graph.

Having just started using sonar, I would like to check in on the situation.

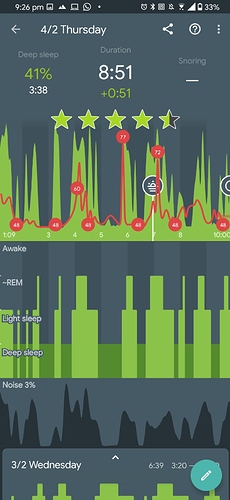

Please check my latest sleep tracking results, where an Amazfit Bip S (accelerometer available) and sonar were used together. The topmost waveform is presumably the accelerometer input and the bottom one the sonar waveform. Between the two, peaks mostly align, but their amplitude varies in some places. This is not surprising, as an accelerometer by nature measures acceleration, while the sonar sensor probably measures displacement or velocity of some sort.

Now, I see above that the Urbandroid team does not think that implementing support for both sensors is important:

We are planning it in future, but to be honest, not very near future. Sleep tracking with real-time analysis is quite complicated even when we use only one source of data, so introducing two flows of data and two real-time estimation computing would be tricky and CPU demanding (hence battery demanding as well).

and that some disagree with having two data streams as input. Note that input support is different from input analysis.

Firstly, for input support. Question: Do we have the ability to capture sonar and accelerometer input at the same time right now? If not, when in the future? If we capture it, at least it can be uploaded to SleepCloud for some enthusiastic individuals to attempt to use these two input streams as an additional source for improving accuracy.

Secondly, for input analysis (I’m not quite sure, but sonar detects displacement/velocity while an accelerometer definitely detects acceleration). Question: Isn’t it more accurate to get the displacement/velocity/acceleration directly from the proper sensor rather than having to differentiate or integrate it? Having analysis capability would be useful regardless of the battery concern (users who use sonar probably have to charge their phone overnight anyway, as “sonar drains the battery,” say the support docs somewhere, if memory serves).
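To illustrate why differentiating can be less accurate than measuring directly, here is a small Python sketch. It is purely a numerical toy, not a model of the app or of any real sensor: the sine-wave "movement", the sampling interval, and the noise level are all made-up assumptions. It estimates acceleration from displacement samples with a second difference and shows how a tiny displacement error turns into a much larger acceleration error.

```python
import math

def second_difference(x, dt):
    """Estimate acceleration from displacement samples (central second difference)."""
    return [(x[i + 1] - 2 * x[i] + x[i - 1]) / dt**2 for i in range(1, len(x) - 1)]

dt = 0.1     # sampling interval in seconds (hypothetical)
eps = 0.001  # size of the displacement measurement error (hypothetical)
t = [i * dt for i in range(100)]

clean = [math.sin(ti) for ti in t]
# Worst-case alternating error: +eps, -eps, +eps, ...
noisy = [xi + eps * (-1) ** i for i, xi in enumerate(clean)]

# Exact second derivative of sin(t) at the interior sample points.
true_accel = [-math.sin(ti) for ti in t[1:-1]]

def max_error(estimate):
    """Largest absolute deviation from the true acceleration."""
    return max(abs(e - a) for e, a in zip(estimate, true_accel))

clean_error = max_error(second_difference(clean, dt))
noisy_error = max_error(second_difference(noisy, dt))
print(f"error without noise: {clean_error:.4f}")
print(f"error with noise:    {noisy_error:.4f}")
```

With this alternating error pattern, the error in the derived acceleration is on the order of 4 * eps / dt**2, so hundreds of times larger than the displacement error itself. Integration has the opposite problem: small offsets accumulate into drift over time.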

The sole reason I would like the two to be combined is to improve awake detection. Being able to compare data from sonar breath rate tracking, along with movement and heart rate detection from a smartwatch, would make for the best possible detection you can get at home for actual awake/asleep times, without having to go to a lab to do a sleep study.

Hi, I’m wondering if this feature has ultimately found its way into the product backlog.

In my experience, tracking using Sonar, Accelerometer, and Wearables produces significantly different hypnograms, resulting in vast discrepancies in estimated sleep duration.

It’s hard to say which one to trust.

I believe integrating data from different sensors would help improve tracking, especially if machine learning were used.

Moreover, having accumulated years of data from thousands of devices, some data science and machine learning might help improve data interpretation.

Hi @Immortal, if you have vastly different sleep durations detected depending on the sensor you use, the awake detection might have trouble in your environment. Ideally, use the Left ≡ menu → (?) Support → Report a bug, right after a night when your sleep duration does not reflect reality. I will check which awake class needs fine-tuning.

In some cases, using the sonar can trigger “phone’s activity” data in the system, which leads to overestimating the awake times with sonar.

The algorithms behind the sleep analysis were trained on data sets from sleep labs (with access to actual EEG data). Data science and machine learning were indeed engaged to achieve the highest possible accuracy.

The app uses one source of data for technical reasons; using more sources completely drained the battery on most phones in just a few hours.